The material, and with it the look of the still white and boring triangles, is created with triplanar texturing. This article first shows the core technique of blending three textures and then extends it with normal mapping for a relief-like look with more structure. The code shown here consists of Cg shaders, which are platform independent.

Triplanar Texturing

The main problem is mapping vertex coordinates to UV coordinates on a texture. On classic terrain, this is often solved with planar mapping: the texture is projected onto the terrain from above, like a blanket, and stretched until it fits. It is little more than a one-liner in the fragment shader:

    float2 coord = position.xz * scale;
    float4 col1 = tex2D(texPlanarSampler, coord);

scale is a factor which determines how often the texture is repeated all over the terrain.

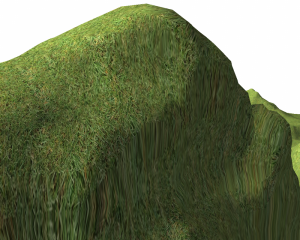

For volume terrain, however, the resulting stretching is no longer acceptable, as figure 1 shows.
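The amount of stretching can be estimated with a little trigonometry. As a rough sketch, assume a flat patch of terrain that is tilted by an angle θ against the horizontal plane (θ and the factor k are introduced here only for illustration). A texture projected from above then covers a longer distance on the slope than on flat ground:

```latex
\[
  k(\theta) = \frac{1}{\cos\theta},
  \qquad k(0^\circ) = 1,
  \qquad k(60^\circ) = 2,
  \qquad k(80^\circ) \approx 5.76
\]
```

On gentle hills the factor stays close to 1, which is why planar mapping works for classic terrain. On the steep walls and overhangs of a volume terrain it grows without bound as θ approaches 90°.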

This is where triplanar texture mapping comes into play. Basically, it maps three different textures onto the terrain: one from above, one from the front and one from the side. How much each texture contributes to the final color is controlled by the normal. If the normal at a position points mostly upwards, the texture from above contributes the most. In the areas in between, the three textures are blended smoothly.

The basics are presented by Geiss [Gei07] and they are extended by a few more control parameters by Lengyel [Len10, P. 48].

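First, the blend factors are calculated from the normal, with all operations applied per component:

$$\tilde{w} = \max\left(|\vec{n}| - \delta,\ 0\right)^{m}$$

$\vec{n}$ is the current normal, $m$ controls the transition speed between the three textures and $\delta$ is a plateau on which small components of the normal have no influence. Now, those factors get normalized:

$$\vec{w} = \frac{\tilde{w}}{\tilde{w}_x + \tilde{w}_y + \tilde{w}_z}$$

With this, the final color is blended together from the three texture colors:

$$c = c_x w_x + c_y w_y + c_z w_z$$

$c_x$, $c_y$ and $c_z$ are fetched from the three planar texture projections, with the textures $T_x$, $T_y$ and $T_z$, the fragment position $\vec{p}$, an offset $o$ and the texture scale $s$:

$$c_x = T_x\big((\vec{p}_{yz} + o)\, s\big), \quad c_y = T_y\big((\vec{p}_{zx} + o)\, s\big), \quad c_z = T_z\big((\vec{p}_{xy} + o)\, s\big)$$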

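Or, expressed in Cg:

    // Blend factors from the normal: remove the plateau, then sharpen the transition.
    float3 blendWeights = abs(unitNormal);
    blendWeights = blendWeights - plateauSize;
    blendWeights = pow(max(blendWeights, 0), transitionSpeed);
    // Normalize the factors so that they sum to 1.
    blendWeights /= (blendWeights.x + blendWeights.y + blendWeights.z);

    // One planar projection per axis. nLength is an offset defined elsewhere in the shader.
    float2 coord1 = (position.yz + nLength) * texScale;
    float2 coord2 = (position.zx + nLength) * texScale;
    float2 coord3 = (position.xy + nLength) * texScale;

    float4 col1 = tex2D(texFromX, coord1);
    float4 col2 = tex2D(texFromY, coord2);
    float4 col3 = tex2D(texFromZ, coord3);

    // Blend the three colors with the factors.
    float4 textColour = float4(col1.xyz * blendWeights.x +
                               col2.xyz * blendWeights.y +
                               col3.xyz * blendWeights.z, 1);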
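The weight calculation can also be wrapped in a small helper function so that it can be reused for color and normal map blending. The following is only a sketch: the function name `triplanarBlendWeights` and the epsilon guard are assumptions made for this example and are not part of the original shader.

```cg
// Hypothetical helper, not from the original shader.
// Returns blend weights that sum to 1 for a unit-length normal.
float3 triplanarBlendWeights(float3 unitNormal, float plateauSize, float transitionSpeed)
{
    // Components below the plateau are clamped to 0, the rest is sharpened.
    float3 w = pow(max(abs(unitNormal) - plateauSize, 0), transitionSpeed);

    // Normalize. The epsilon (an assumption) avoids a division by zero
    // when plateauSize is so large that all three components are clamped.
    float sum = w.x + w.y + w.z;
    return w / max(sum, 1e-5);
}
```

As a worked example with made-up parameters: for `unitNormal = (0.6, 0.8, 0)`, `plateauSize = 0.2` and `transitionSpeed = 2`, the clamped values are (0.4, 0.6, 0), the powers are (0.16, 0.36, 0) and the normalized weights are roughly (0.308, 0.692, 0). The largest component of a unit normal is never smaller than 1/√3 ≈ 0.577, so the sum only becomes zero if `plateauSize` is at least that large.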

Specular Mapping

Specular mapping extends ordinary specular lighting by taking into account that specular highlights do not appear everywhere on an object. The metal button on a vest has a specular highlight, the cloth does not.

The information about where to show highlights can be stored in a single color channel of the texture and used as a factor in the ordinary specular light calculation. Here, this factor is already stored in the blended alpha channel of the color.
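How such a factor could enter the lighting is sketched below. The article does not state which specular model is used, so Blinn-Phong is an assumption here, and the helper names `blendTriplanar` and `specularTerm` as well as the `shininess` parameter are hypothetical. The sketch also assumes that the alpha channels of the three textures are blended with the same weights as the color channels.

```cg
// Hypothetical helper: blends all four channels, so that the alpha
// channel carries the blended specular factor.
float4 blendTriplanar(float4 col1, float4 col2, float4 col3, float3 blendWeights)
{
    return col1 * blendWeights.x + col2 * blendWeights.y + col3 * blendWeights.z;
}

// Hypothetical helper: Blinn-Phong highlight (an assumed model),
// scaled by the factor from the specular map.
// lightDir and eyeDir are unit vectors pointing away from the fragment.
float specularTerm(float3 normal, float3 lightDir, float3 eyeDir,
                   float shininess, float specularFactor)
{
    float3 halfVec = normalize(lightDir + eyeDir);
    float highlight = pow(max(dot(normal, halfVec), 0), shininess);
    return highlight * specularFactor;
}
```

A call could then look like `specularTerm(normal, lightDir, eyeDir, shininess, blended.a)`, where `blended` is the result of `blendTriplanar`. A factor of 0 removes the highlight completely, a factor of 1 leaves it unchanged.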

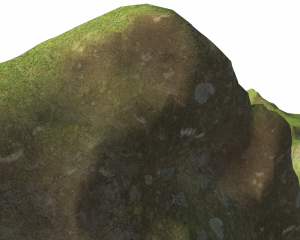

Figure 2 shows the result so far.

Normal Mapping

This already looks nice, but a simulated relief via normal mapping improves the look further. Normal mapping modifies the normal of each fragment and thereby influences the lighting, which makes a relief appear [NFHS11, P. 339 – 341].

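First, the color $c$ fetched from the normal map texture needs to be converted from the range 0 to 1 into a proper normal with components between -1 and 1:

$$\vec{d} = (c - 0.5) \cdot 2$$

And again, the three normal map vectors $\vec{d}_x$, $\vec{d}_y$ and $\vec{d}_z$ are blended together by the triplanar factors $w_x$, $w_y$ and $w_z$:

$$\vec{n}' = \vec{d}_x w_x + \vec{d}_y w_y + \vec{d}_z w_z$$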
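As a quick sanity check of the range conversion and the blending, consider the typical color of a flat normal map texel, (0.5, 0.5, 1). The symbols below are chosen for this example only: c is the fetched color, d the decoded vector and w_x, w_y, w_z the normalized blend factors.

```latex
\[
  d = \bigl((0.5, 0.5, 1) - 0.5\bigr) \cdot 2 = (0, 0, 1)
\]
\[
  d\,w_x + d\,w_y + d\,w_z = d\,(w_x + w_y + w_z) = (0, 0, 1)
  \qquad \text{for } w_x + w_y + w_z = 1
\]
```

If all three normal maps are flat at a fragment, the blended normal is the unperturbed one, so the lighting is the same as without normal mapping.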

And now some theory: the fetched normals live in a different space than the light sources and objects, so one space must be transformed into the other. Here, it is the view direction, defined as the normalized difference between the camera position and the fragment position, that gets transformed. The fragment has a tangent $\vec{t}$, a bitangent $\vec{b}$ and its normal $\vec{n}$. Together, they define its orientation matrix. With the transposed matrix, the view direction is brought into the texture space of the normal map normals [NFHS11, P. 345 – 347].

Bitangent and tangent can be calculated. First the bitangent, with $\vec{t}_0 = (1, 0, 0)^T$:

$$\vec{b} = \frac{\vec{t}_0 \times \vec{n}}{|\vec{t}_0 \times \vec{n}|}$$

And the final tangent:

$$\vec{t} = \frac{\vec{n} \times \vec{b}}{|\vec{n} \times \vec{b}|}$$

Putting it into Cg again, where normal2 is used for all lighting calculations from there on:

    // Converts a color from the range 0 to 1 into a vector in the range -1 to 1.
    float3 expand(float3 v)
    {
        return (v - 0.5) * 2;
    }

    // Build the tangent frame from the normal.
    float3 tangent = float3(1, 0, 0);
    float3 binormal = normalize(cross(tangent, unitNormal));
    tangent = normalize(cross(unitNormal, binormal));

    // Transform the view direction into the space of the normal maps.
    float3x3 TBN = float3x3(tangent, binormal, unitNormal);
    float3 eyeDir2 = normalize(mul(TBN, eyeDir));

    // Fetch and decode the three normal maps.
    float3 bumpFetch1 = expand(tex2D(texFromXNormal, coord1).rgb);
    float3 bumpFetch2 = expand(tex2D(texFromYNormal, coord2).rgb);
    float3 bumpFetch3 = expand(tex2D(texFromZNormal, coord3).rgb);

    // Blend them with the triplanar factors.
    float3 normal2 = bumpFetch1.xyz * blendWeights.x +
                     bumpFetch2.xyz * blendWeights.y +
                     bumpFetch3.xyz * blendWeights.z;
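One detail to watch out for: with the fixed start tangent (1, 0, 0), the cross product becomes the zero vector as soon as the normal points exactly along the x axis, and normalizing it is then undefined. On a volume terrain, such normals can occur. A possible workaround is sketched below. The function name `buildTBN`, the alternative axis and the threshold of 0.9 are assumptions made for this example and are not taken from the original shader.

```cg
// Hypothetical helper, not from the original shader.
// Builds the tangent frame and avoids the degenerate case in which
// the normal is (almost) parallel to the start tangent.
float3x3 buildTBN(float3 unitNormal)
{
    // Assumption: switch to the z axis when the normal is close to the x axis.
    float3 seed = (abs(unitNormal.x) > 0.9) ? float3(0, 0, 1) : float3(1, 0, 0);

    float3 binormal = normalize(cross(seed, unitNormal));
    float3 tangent = normalize(cross(unitNormal, binormal));

    return float3x3(tangent, binormal, unitNormal);
}
```

It would be used as `float3 eyeDir2 = normalize(mul(buildTBN(unitNormal), eyeDir));`. For a normal pointing straight up, (0, 1, 0), this yields the binormal (0, 0, 1) and the tangent (1, 0, 0), the same frame as the fixed start tangent. The price of the switch is a jump in the tangent direction where the start axis changes.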

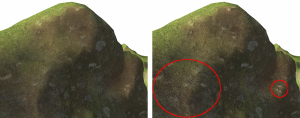

And this is the final result: